We are pleased to introduce our software for facial symmetry analysis, which provides a numerical evaluation of Facial Palsy. The program analyzes both still images and videos, allowing users to assess and compare symmetry before and after surgery or rehabilitation sessions.

For still images, the software computes an Asymmetry Score and a Cosine Score to quantify facial symmetry. For videos, the program analyzes Motion Task Measurements to characterize dynamic facial movements.

Numerical Analysis

The software computes two core metrics, the Asymmetry Score and the Cosine Score, for quantitative assessment of facial asymmetry from visual input. For each of three motion tasks (smile, eye closure, and eyebrow raise), left and right facial movements are measured independently to quantify the degree of asymmetry.

Asymmetry Score

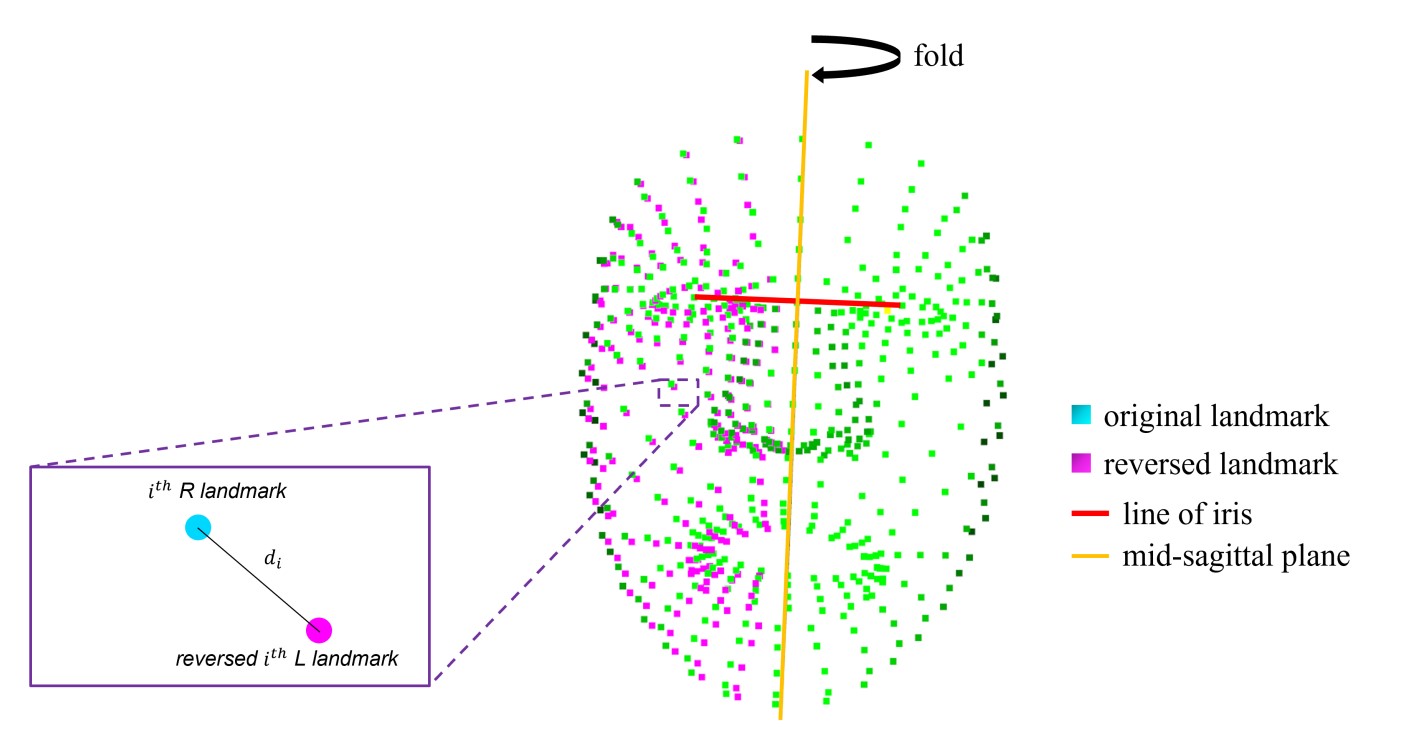

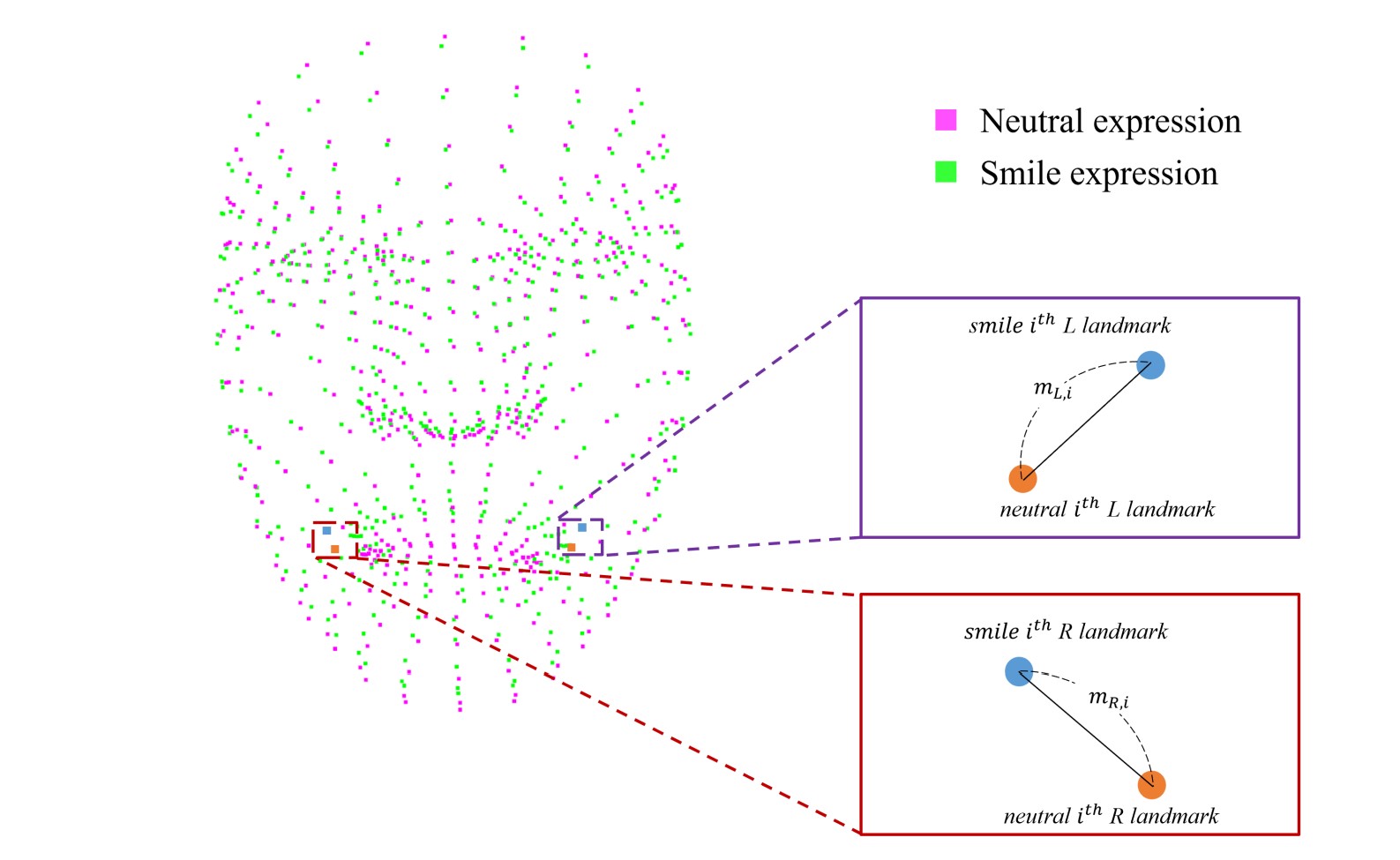

The Asymmetry Score evaluates the difference in movement magnitude between the left and right sides. For each motion task, left (L) and right (R) movement magnitudes are measured using task-specific methods, and the Asymmetry Index is computed. An activation gate is then applied as a correction factor that indicates whether a sufficient amount of movement was observed in the region of interest; when movement is too small for reliable measurement, the gate suppresses the influence of asymmetry on the score. The Asymmetry Index and final score are defined as follows:
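The exact formulas are not reproduced in this text, so the following is only a minimal sketch of how an index of this kind and an activation gate could fit together. The normalized-difference form of the index, the linear ramp used for the gate, the `min_movement` threshold, and all function names are assumptions made for illustration, not the software's actual definitions.

```python
def asymmetry_index(left: float, right: float, eps: float = 1e-8) -> float:
    """Relative left-right difference in [0, 1]; 0 means equal magnitudes.

    Assumed form: |L - R| / max(L, R). The software's own index may differ.
    """
    left, right = abs(left), abs(right)
    return abs(left - right) / (max(left, right) + eps)


def activation_gate(left: float, right: float, min_movement: float = 0.02) -> float:
    """Ramp from 0 to 1 as the larger movement approaches min_movement.

    min_movement is a hypothetical threshold in the same units as L and R.
    """
    return min(1.0, max(abs(left), abs(right)) / min_movement)


def asymmetry_score(left: float, right: float, min_movement: float = 0.02) -> float:
    """Gate-weighted asymmetry: small movements contribute little asymmetry."""
    gate = activation_gate(left, right, min_movement)
    return gate * asymmetry_index(left, right)
```

With this form, two sides that move equally score 0, a side that moves half as much as the other scores about 0.5, and the same 2:1 ratio observed on a barely visible movement is scaled down by the gate.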

Cosine Score

The Cosine Score evaluates the directional similarity of left and right movements. From the peak motion interval, the cosine similarity and magnitude ratio of the left and right displacement vectors are combined into a single measure. While the Asymmetry Score compares "how much each side moved," the Cosine Score compares "how each side moved." The Cosine Index and final score are defined as follows:
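The exact formulas are not reproduced in this text. As a rough illustration of combining cosine similarity with a magnitude ratio, the sketch below assumes 2D displacement vectors measured from rest to peak motion, mirrors the right-side vector across the facial midline before comparing, clamps opposing directions to zero, and multiplies the two terms. Each of these choices, and the function name, is an assumption rather than the software's definition.

```python
import math


def cosine_index(left_vec, right_vec, eps: float = 1e-8) -> float:
    """Directional similarity of left and right displacement, in [0, 1].

    left_vec, right_vec: (dx, dy) displacement from rest to peak motion.
    """
    lx, ly = left_vec
    # Assumption: flip x on the right side so that a mirror-symmetric
    # movement points in the same direction for both sides.
    rx, ry = -right_vec[0], right_vec[1]

    norm_l = math.hypot(lx, ly)
    norm_r = math.hypot(rx, ry)
    if norm_l < eps or norm_r < eps:
        return 0.0  # no measurable movement on at least one side

    cos_sim = (lx * rx + ly * ry) / (norm_l * norm_r)
    cos_sim = max(0.0, cos_sim)  # treat opposing directions as no similarity
    magnitude_ratio = min(norm_l, norm_r) / max(norm_l, norm_r)
    return cos_sim * magnitude_ratio
```

Under these assumptions a mirror-symmetric movement gives a value near 1, a movement in the right direction but with half the magnitude gives 0.5, and movements in opposing directions give 0.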

Motion Task Measurements

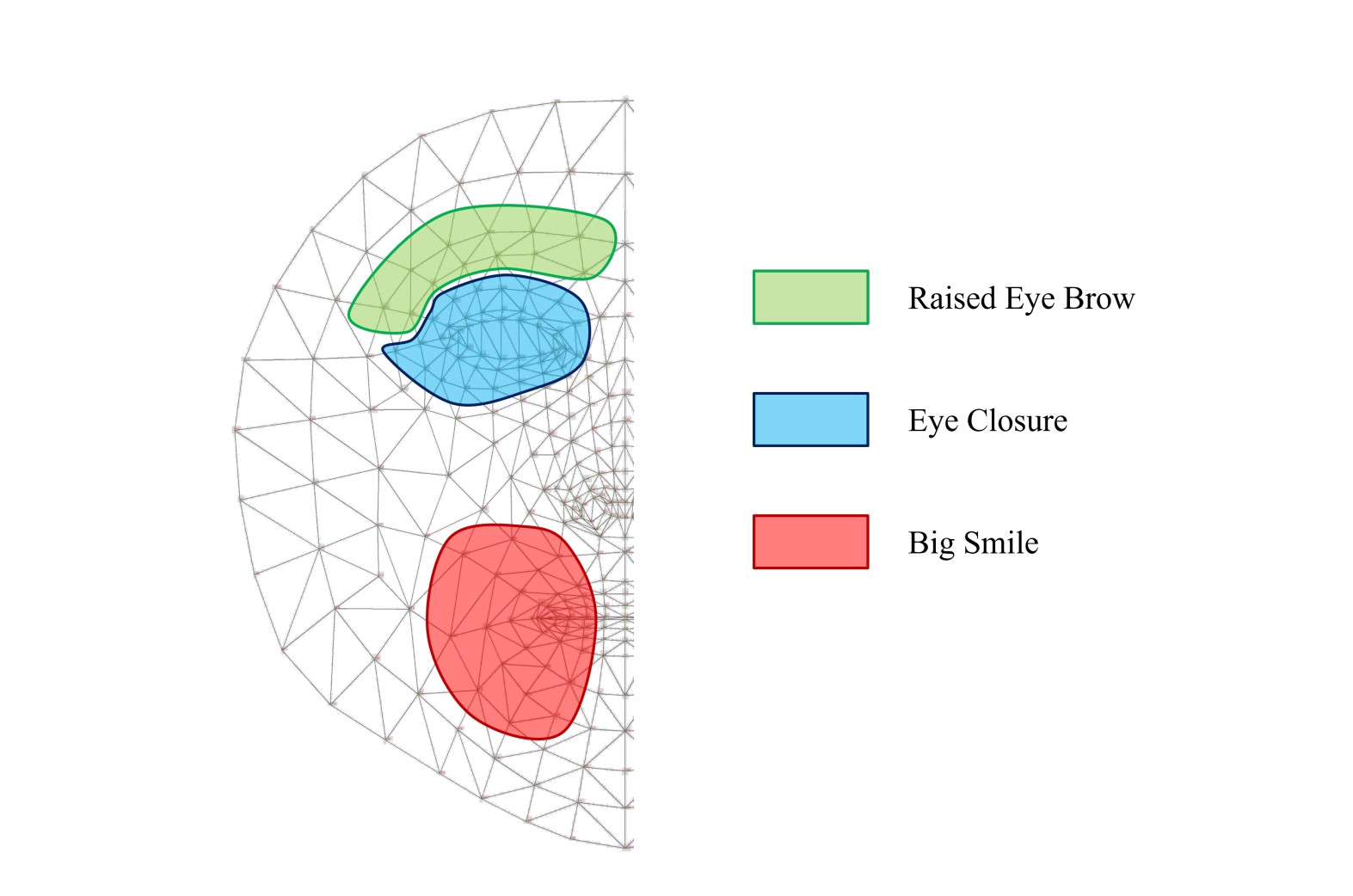

The primary measurement method varies by motion task. For smile, commissure excursion of the mouth corner landmark group is measured. For eye closure (blinking motion), the closure fraction is computed based on changes in the polygon area of the eye contour. For eyebrow raise (eyebrow motion), the vertical displacement (brow Y displacement) of the eyebrow landmarks is measured. The landmark regions referenced for each motion task are illustrated in the figure.
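The three measurements can be sketched in a few lines each. The version below is an illustration under stated assumptions: landmarks are (x, y) points in image coordinates with y increasing downward, the closure fraction is taken as one minus the ratio of closed to open eye area, and the excursion is measured on the centroid of the mouth corner landmark group. The function names and these exact definitions are hypothetical.

```python
import math


def polygon_area(points):
    """Area of a simple polygon [(x, y), ...] using the shoelace formula."""
    twice_area = 0.0
    n = len(points)
    for i in range(n):
        x1, y1 = points[i]
        x2, y2 = points[(i + 1) % n]
        twice_area += x1 * y2 - x2 * y1
    return abs(twice_area) / 2.0


def closure_fraction(open_contour, closed_contour, eps: float = 1e-8) -> float:
    """Eye closure: 0 = fully open, 1 = fully closed (assumed definition)."""
    frac = 1.0 - polygon_area(closed_contour) / (polygon_area(open_contour) + eps)
    return min(1.0, max(0.0, frac))


def _centroid(points):
    xs = [p[0] for p in points]
    ys = [p[1] for p in points]
    return sum(xs) / len(xs), sum(ys) / len(ys)


def commissure_excursion(rest_points, peak_points) -> float:
    """Smile: distance travelled by the mouth corner group's centroid."""
    (x0, y0), (x1, y1) = _centroid(rest_points), _centroid(peak_points)
    return math.hypot(x1 - x0, y1 - y0)


def brow_y_displacement(rest_points, peak_points) -> float:
    """Eyebrow raise: mean upward shift (positive when the brow rises)."""
    return _centroid(rest_points)[1] - _centroid(peak_points)[1]
```

Each function would be called once for the left side and once for the right, producing the L and R magnitudes that feed the scores described earlier.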

Deep Learning Analysis

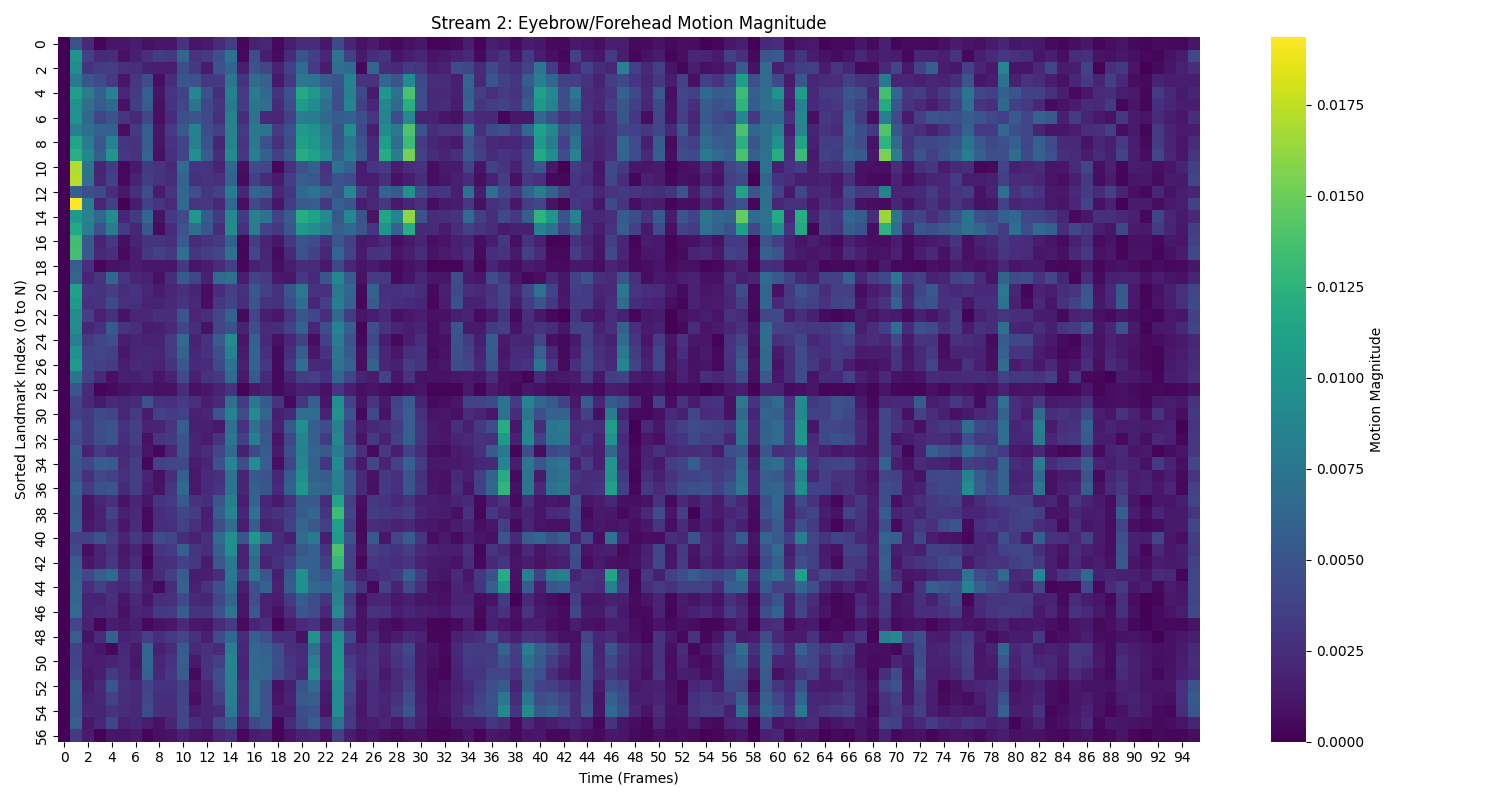

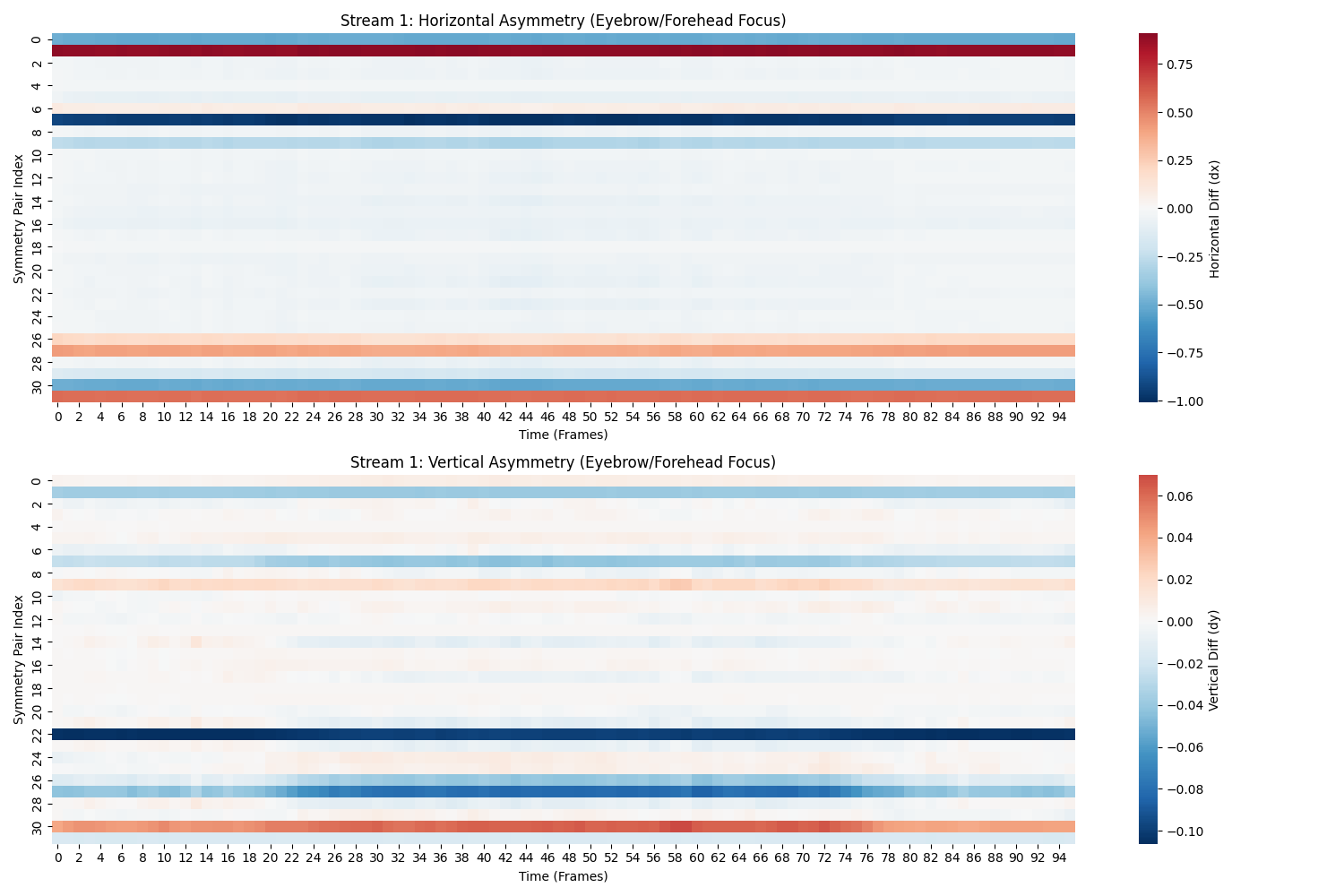

Deep Learning Based Dynamic Expression Assessment: Our facial palsy diagnostic support system uses a landmark-based two-stream deep learning model to analyze asymmetric motion in the eyebrows, forehead, and periocular regions from facial videos.

First, each input video is face-centered and cropped, and the sequence is normalized to a fixed length of 96 frames. MediaPipe FaceMesh is then used to extract 478 facial landmarks per frame. The landmarks are normalized using the nose tip and inter-eye distance to reduce the effects of head position and camera scale.

From these normalized landmarks, the model constructs two inputs: a symmetry map (dx, dy) built from left-right paired landmark regions, and a motion sequence built from the frame-to-frame displacement of selected eyebrow, forehead, and eye landmarks. The symmetry map is processed by an SE-Residual CNN to learn spatial asymmetry patterns, while the motion sequence is analyzed by a BiLSTM with attention to capture temporal dynamics and emphasize clinically important frames.

Finally, the two feature streams are fused to classify facial palsy-related grades (classes), enabling quantitative assessment of upper-face dysfunction across facial expression tasks. The landmark regions used for each expression analysis are illustrated in the figure.
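The preprocessing that feeds the two streams can be sketched without any deep learning framework. The code below is a simplified illustration, not the system's implementation: it assumes landmarks are already available as per-frame lists of (x, y) points, takes the landmark indices as caller-supplied parameters, treats the vertical line through the nose tip as the facial midline, and resamples by picking the nearest source frame. Cropping, landmark extraction, and the network itself are omitted, and all function names are hypothetical.

```python
import math


def normalize_landmarks(frame, nose_idx, left_eye_idx, right_eye_idx, eps=1e-8):
    """Center on the nose tip and scale by the inter-eye distance."""
    nx, ny = frame[nose_idx]
    lx, ly = frame[left_eye_idx]
    rx, ry = frame[right_eye_idx]
    scale = math.hypot(lx - rx, ly - ry) + eps
    return [((x - nx) / scale, (y - ny) / scale) for x, y in frame]


def symmetry_map(frame, pairs):
    """(dx, dy) for each (left_idx, right_idx) pair of a normalized frame.

    The right point is mirrored across x = 0 (assumed midline), so a
    perfectly symmetric pair yields (0, 0).
    """
    result = []
    for left_idx, right_idx in pairs:
        lx, ly = frame[left_idx]
        rx, ry = frame[right_idx]
        result.append((lx + rx, ly - ry))  # lx - (-rx), ly - ry
    return result


def motion_sequence(frames, indices):
    """Frame-to-frame displacement of the selected landmarks."""
    sequence = []
    for prev, cur in zip(frames, frames[1:]):
        sequence.append(
            [(cur[i][0] - prev[i][0], cur[i][1] - prev[i][1]) for i in indices]
        )
    return sequence


def resample_indices(num_frames, target_len=96):
    """Evenly spaced source frame indices for a fixed-length sequence."""
    if num_frames <= 0:
        raise ValueError("num_frames must be positive")
    if target_len == 1:
        return [0]
    step = (num_frames - 1) / (target_len - 1)
    return [min(num_frames - 1, round(i * step)) for i in range(target_len)]
```

In a pipeline like the one described, `resample_indices` would select 96 frames from the cropped video, each selected frame would pass through `normalize_landmarks`, and `symmetry_map` and `motion_sequence` would then produce the inputs for the spatial and temporal streams respectively.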

With this innovative software, healthcare professionals, researchers, and patients can gain a deeper understanding of facial symmetry, monitor progress over time, and make well-informed decisions about treatment and rehabilitation. Discover the capabilities of our facial symmetry analysis software today and explore new possibilities in Facial Palsy research and patient care.

Development Team

This software is the product of a multidisciplinary collaboration, combining the expertise of medical professionals and technical researchers to provide a solution for facial symmetry analysis.

Medical Professionals from Asan Medical Center, Seoul, Korea

- Tae Suk Oh

- Young Chul Kim

Core Developers from Kwangwoon University, Seoul, Korea

- Soon Chul Kwon

- Jae Seung Kim

- Kim Mu Jung

- Seong Chan Park

- Jin Bin Kim

- Jun Sik Kim

- Yu Seong Lee

Advisor from Kyung Hee University Medical Center, Seoul, Korea

- Sang Hoon Kim (Otorhinolaryngology)

Development Environment:

- OS: Windows 11 Pro for Workstations

- CPU: AMD Ryzen 7 7800X3D

- RAM: 64 GB

- GPU: NVIDIA GeForce RTX 3090

Licenses:

Our software is released under the GPL v3 (GNU General Public License, version 3) and the Apache License 2.0. Here are the corresponding statements:

- GPL v3 License: The software follows the GNU General Public License, version 3 (GPL v3). This license is a free software license that grants rights to copy, distribute, modify, and use the software. If you choose to use this software under the terms of the GPL v3, you must comply with the conditions stated in the license. The full text of the GPL v3 license can be found on the GNU website.

- Apache License 2.0: The software is licensed under the Apache License, version 2.0. This license is an open-source license that grants rights for both commercial and non-commercial use. If you choose to use this software under the terms of the Apache License 2.0, you must comply with the conditions stated in the license. The full text of the Apache License 2.0 can be found on the Apache Software Foundation (ASF) website.

Therefore, the use, reproduction, distribution, and modification of this software should adhere to the provisions of both the GPL v3 and Apache License 2.0. This allows for the freedom to use and improve the software.